Churn prediction demo

IBM Telco Customer Churn — trained pipeline, F1-tuned threshold at inference. Try the form below or open /docs for the JSON schema.

About this project

What it does. Contract, tenure, charges, and service flags → churn probability, binary label (F1-tuned threshold), and a risk band.
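The mapping from a churn probability to a label and a risk band can be sketched as follows. This is an illustration only: the threshold value, the band cutoffs, and the names `Prediction` and `to_prediction` are hypothetical, since the page does not state the tuned threshold or how the bands are defined.

```python
from dataclasses import dataclass

# Hypothetical values: the demo's tuned threshold and band cutoffs
# are not given in the text.
THRESHOLD = 0.4
BAND_CUTOFFS = ((0.7, "high"), (0.4, "medium"))  # checked from highest to lowest


@dataclass
class Prediction:
    probability: float
    label: int       # 1 = predicted churn, 0 = predicted stay
    risk_band: str   # "low", "medium", or "high"


def to_prediction(probability: float, threshold: float = THRESHOLD) -> Prediction:
    """Turn a model probability into a binary label and a coarse risk band."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    label = int(probability >= threshold)
    band = "low"
    for cutoff, name in BAND_CUTOFFS:
        if probability >= cutoff:
            band = name
            break
    return Prediction(probability, label, band)


print(to_prediction(0.82))
```

Keeping the threshold and the band cutoffs as separate settings means the binary label can be retuned without changing what the bands mean.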

How it was built. Python: feature engineering, LogReg / Random Forest / Gradient Boosting, best by ROC-AUC. Inference-only deploy — model/churn_model.joblib.
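Picking the best model by ROC-AUC could look like the dependency-free sketch below. It assumes each candidate has already produced churn probabilities for the same labeled rows. The helper names `roc_auc` and `pick_best` and the toy scores are illustrative, and the repo's own training code may well use a library implementation instead.

```python
def roc_auc(y_true, scores):
    """ROC-AUC as the chance a random positive outscores a random negative.

    Ties between a positive and a negative count as half a win.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def pick_best(y_true, scores_by_model):
    """Return the name of the highest-AUC model and all AUC values."""
    aucs = {name: roc_auc(y_true, s) for name, s in scores_by_model.items()}
    best = max(aucs, key=aucs.get)
    return best, aucs


# Toy labels and scores, made up for illustration only.
y = [0, 0, 1, 1]
candidates = {
    "logreg": [0.1, 0.4, 0.35, 0.8],
    "gradient_boosting": [0.1, 0.2, 0.7, 0.9],
}
best_name, all_aucs = pick_best(y, candidates)
print(best_name, all_aucs)
```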

- GET /meta — stack & training summary

- GET /health — model file check
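A model file check of the kind GET /health describes might look like the sketch below. Only the path model/churn_model.joblib comes from the text. The function name `health` and the response fields are assumptions, and the demo's actual FastAPI handler may differ.

```python
from pathlib import Path

MODEL_PATH = Path("model/churn_model.joblib")  # path named in the text


def health(model_path: Path = MODEL_PATH) -> dict:
    """Report whether the serialized model file exists and is non-empty.

    The response shape here is hypothetical, not the demo's documented schema.
    """
    present = model_path.is_file() and model_path.stat().st_size > 0
    return {
        "status": "ok" if present else "degraded",
        "model_file": str(model_path),
        "model_present": present,
    }


print(health())
```

Checking only for the file keeps the endpoint cheap; it does not prove the model loads or predicts correctly.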

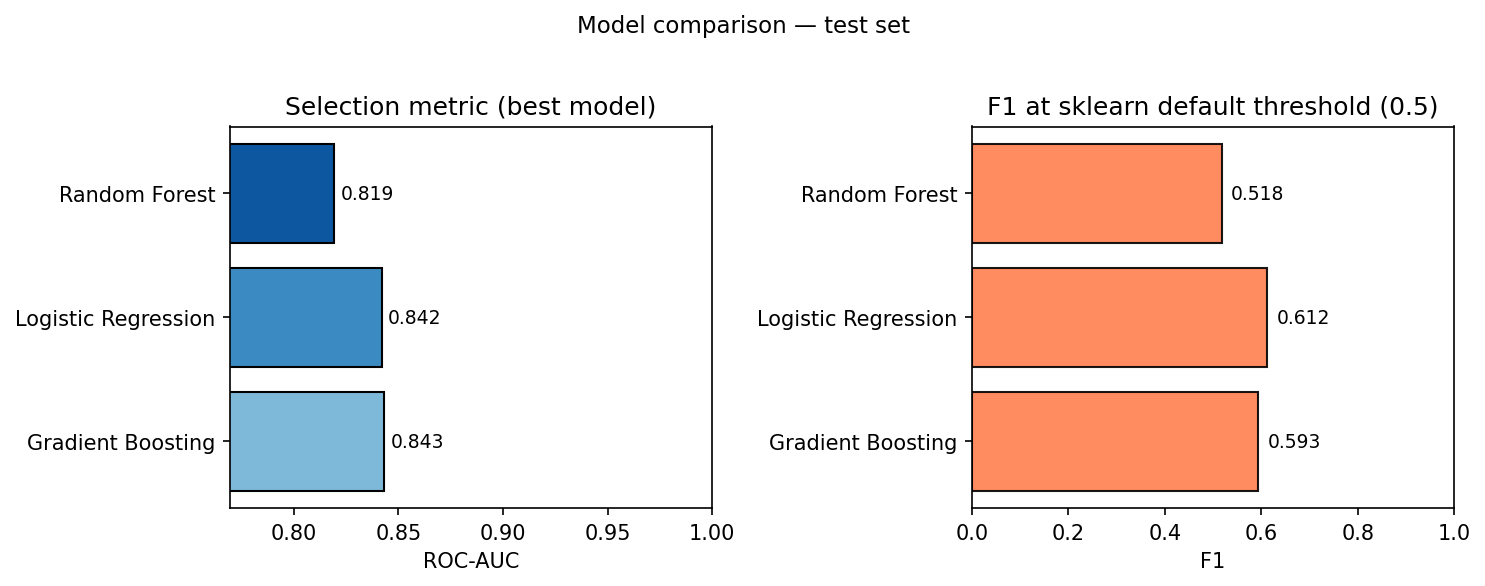

Hold-out test metrics

Metrics are for the best model by test ROC-AUC. F1 is reported at a 0.5 threshold; the live API uses the F1-tuned threshold instead.

Key findings

From EDA and modeling — notebooks in the repo.

- Class balance. ~27% churn; stratified split.

- Who churns. Month-to-month and shorter tenure dominate.

- Models. Three compared; API serves ROC-AUC winner.

- Threshold. F1-tuned on the test set — not fixed 0.5.
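F1 threshold tuning, as mentioned in the last finding, can be sketched as a scan over candidate cutoffs that keeps the one with the highest F1. The candidate grid (0.05 to 0.95 in steps of 0.01), the helper names `f1_at` and `tune_threshold`, and the toy data are assumptions for illustration; the notebook's actual procedure may differ.

```python
def f1_at(y_true, probs, threshold):
    """F1 score for the positive (churn) class at a given probability cutoff."""
    tp = fp = fn = 0
    for y, p in zip(y_true, probs):
        predicted = p >= threshold
        if predicted and y == 1:
            tp += 1
        elif predicted and y == 0:
            fp += 1
        elif not predicted and y == 1:
            fn += 1
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def tune_threshold(y_true, probs, candidates=None):
    """Return (threshold, f1) for the best candidate; the lowest cutoff wins ties."""
    if candidates is None:
        candidates = [i / 100 for i in range(5, 96)]  # hypothetical grid
    best = max(candidates, key=lambda t: f1_at(y_true, probs, t))
    return best, f1_at(y_true, probs, best)


# Toy labels and probabilities, made up for illustration only.
y = [0, 0, 0, 1, 0, 1, 1]
p = [0.05, 0.10, 0.20, 0.30, 0.35, 0.60, 0.90]
best_t, best_f1 = tune_threshold(y, p)
print(best_t, round(best_f1, 3), round(f1_at(y, p, 0.5), 3))
```

On this toy data a cutoff below 0.5 recovers one more churner at the cost of one false alarm, which is the kind of trade the tuned threshold makes.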

Run python scripts/generate_figures.py, then copy reports/figures/model_comparison.png → vercel_demo/public/model_comparison.png.

Notebooks on GitHub — 01 EDA, 02 features, 03 models, 04 threshold.

Limitations

- Dataset. Public teaching data — not production distribution.

- Churn definition. Snapshot label; real goals may need different cutoffs.

- Ops. No retraining, drift, or monitoring in this demo.

The GitHub repo contains the training code, EDA, model comparison, threshold analysis, and FastAPI source.